Detailed Description

As mentioned in the abstract, we tested three different synchronization methods.

A. Buffering

Having a buffer on the receiver side: By default, a GStreamer buffer stores one second's worth of data and plays the packets in order of their timestamps. While this buffer absorbs network jitter, it cannot compensate for the delay caused by different cameras having different encoder rates, or by different sources having different transmission and network delays.

While the difference in encoding rates can be handled with another buffer in the sender's pipeline, the difference in network delays cannot be accommodated. The video in the results section of this site shows that there is always a fixed latency between the streams from the two cameras; we intentionally started one of the streams late. Note that every buffer or queue introduced into the pipeline increases the absolute latency of the streams.
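The trade-off between absolute and relative latency can be illustrated with a minimal sketch. It assumes a purely additive delay model, and all delay values and function names are hypothetical, chosen only for illustration; they are not measurements from our setup.

```python
def absolute_latency_ms(encode_ms, network_ms, buffer_sizes_ms):
    """End-to-end delay of one stream: encoding + network + every buffer/queue."""
    return encode_ms + network_ms + sum(buffer_sizes_ms)


# Hypothetical delays for two cameras, each with a 1000 ms receiver buffer.
cam_a = absolute_latency_ms(encode_ms=40, network_ms=30, buffer_sizes_ms=[1000])
cam_b = absolute_latency_ms(encode_ms=60, network_ms=80, buffer_sizes_ms=[1000])

# The same camera A with one extra 200 ms queue in its pipeline.
cam_a_extra = absolute_latency_ms(encode_ms=40, network_ms=30, buffer_sizes_ms=[1000, 200])

# Identical buffers on both streams leave the difference between them untouched.
relative_ms = abs(cam_a - cam_b)

print(cam_a, cam_b, cam_a_extra, relative_ms)
```

In this model every added queue shows up directly in the absolute latency, while the gap between the two streams is still set by their encoding and network delays.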

Advantages: Very simple to implement. As the buffer size increases, the relative latency decreases.

Disadvantages: Not a production-grade approach, since storage is a concern and the absolute latency increases considerably. Even though we are mainly concerned with relative latency, future applications such as the VANET scenario mentioned in the overview also expect a small absolute delay. In those cases, buffering is not a one-stop solution.

Summary:

Streams Used: RTP

Latency observed: 1-3 seconds, 500 ms if streams are started together

Elements used: Gstreamer queue, rtpjitterbuffer

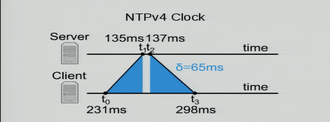

B. Using NTP clocks:

In this method we use NTP clocks to synchronize the various devices in the system. Synchronizing devices requires clocks, and it is important to note that no two clocks run at exactly the same rate or show exactly the same time. We therefore approximately synchronize the devices involved to a common clock and make GStreamer use that clock to synchronize media. As mentioned in the GStreamer section, every pipeline has a master clock that is used to synchronize all media going through the pipeline and to generate the pipeline's running time.

Idea employed: We use an NTP clock that bases its internal time on observations of the time on another machine in the network, and then slave it to the local system clock.

To slave one clock to another, NTP estimates the relative clock rates between the two, calculates the transmission delays of the UDP messages exchanged, and adjusts the offset accordingly.
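As a rough illustration of the offset and delay estimation, the sketch below applies the standard NTP on-wire calculation to the four timestamps of a single request/response exchange. It is a simplified model, not ntpd's implementation: clock-rate estimation and sample filtering are left out, and the function name and timestamp values are hypothetical.

```python
def ntp_offset_and_delay(t0, t1, t2, t3):
    """Estimate clock offset and round-trip delay from one NTP exchange.

    t0: client transmit time (client clock)
    t1: server receive time  (server clock)
    t2: server transmit time (server clock)
    t3: client receive time  (client clock)
    All values are in seconds.
    """
    delay = (t3 - t0) - (t2 - t1)           # time spent on the network, both ways
    offset = ((t1 - t0) + (t2 - t3)) / 2.0  # how far the client is behind the server
    return offset, delay


# Hypothetical exchange: the client clock is 0.5 s behind the server,
# each direction takes 10 ms and the server needs 2 ms to reply.
offset, delay = ntp_offset_and_delay(t0=100.000, t1=100.510, t2=100.512, t3=100.022)
print(f"offset={offset:.3f} s, delay={delay:.3f} s")
```

The offset estimate is exact only when the two network directions take equally long, which is why fluctuating round-trip times limit the accuracy of this approach.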

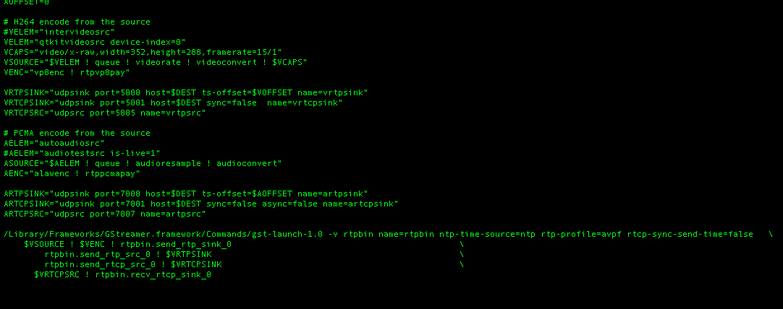

We also change the streams used in this method. While the earlier method used only RTP streams, this method uses RTP streams together with RTCP streams.

Every RTP packet carries a logical timestamp, and the timestamp increment between consecutive frames is constant, fixed by the frame rate and the clock frequency. In our case the increment is 90,000 Hz / 15 fps = 6000. Hence the receiver, which must know the clock rate and the frame rate, can always predict the timestamp of the next expected packet. We use RTCP because RTCP packets are exchanged frequently between the sender and the receiver, and each sender report pairs an RTP timestamp with the corresponding NTP timestamp. Using this, the receiver can map the RTP timestamps of packets from multiple senders to a common NTP timeline (which is why all senders must be synced to a common NTP clock).
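The timestamp arithmetic and the RTP-to-NTP mapping can be sketched as follows. This is a minimal illustration under stated assumptions, not rtpbin's internal code: the function names, the sender-report values and the representation of NTP time as plain seconds are all hypothetical.

```python
CLOCK_RATE_HZ = 90_000
FRAME_RATE_FPS = 15


def rtp_increment(clock_rate_hz=CLOCK_RATE_HZ, fps=FRAME_RATE_FPS):
    """RTP timestamp ticks between two consecutive frames."""
    return clock_rate_hz // fps


def rtp_to_ntp_seconds(rtp_ts, sr_rtp_ts, sr_ntp_seconds, clock_rate_hz=CLOCK_RATE_HZ):
    """Map an RTP timestamp to NTP time using the (RTP, NTP) pair of a sender report."""
    # RTP timestamps are 32-bit and wrap around, so take a signed 32-bit difference.
    diff = (rtp_ts - sr_rtp_ts) & 0xFFFFFFFF
    if diff >= 0x80000000:
        diff -= 0x100000000
    return sr_ntp_seconds + diff / clock_rate_hz


step = rtp_increment()  # 6000 ticks per frame

# Two senders with unrelated RTP timestamp origins, both reporting NTP time 5000.0 s.
# The third frame after each report should land on the same NTP instant.
ntp_a = rtp_to_ntp_seconds(1000 + 3 * step, sr_rtp_ts=1000, sr_ntp_seconds=5000.0)
wrapped = (4294960000 + 3 * step) & 0xFFFFFFFF  # sender B's counter wraps past 2**32
ntp_b = rtp_to_ntp_seconds(wrapped, sr_rtp_ts=4294960000, sr_ntp_seconds=5000.0)

print(step, ntp_a, ntp_b)
```

Although the two senders use unrelated RTP timestamp ranges, both frames map to the same point on the shared NTP timeline, which is what makes cross-stream synchronization possible.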

To implement this behavior we use GStreamer's rtpbin element. We feed the streams of all senders into a common rtpbin for two reasons:

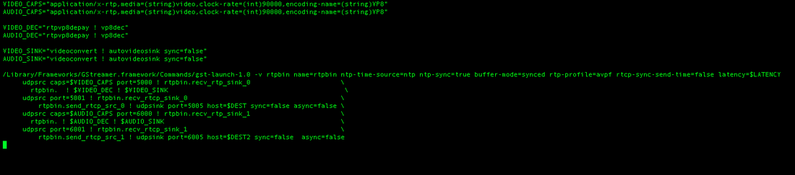

- rtpbin ensures that the receiver starts functioning only after it has started receiving packets from all senders, which is what we want for the indoor localization application.

- rtpbin handles the mapping of the RTP timestamps of the various streams to the global NTP timestamp and assigns this timestamp to the buffer. By default, packets in the receiver buffer are played according to their logical timestamps (buffer timestamps). With rtpbin we can override this behavior so that the buffer plays streams according to the NTP timestamps newly assigned to the packets. Hence, if two senders' streams are mapped to a common rtpbin, the buffer plays both streams according to their NTP timestamps. Since both streams share the same NTP clock, the packets played at any instant correspond to the same NTP time on both streams/devices.
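The effect of playing by NTP timestamp can be pictured with a small sketch that interleaves frames from two senders on one timeline. It only illustrates the idea; rtpbin's actual scheduling is internal to GStreamer, and the function name, tuple layout and timestamp values here are hypothetical.

```python
import heapq


def playout_order(*streams):
    """Merge per-sender frame lists into one list ordered by NTP time.

    Each stream is a list of (ntp_ms, sender, frame_no) tuples already sorted by ntp_ms.
    """
    return list(heapq.merge(*streams))


# Hypothetical NTP times in milliseconds for frames at roughly 15 fps.
cam_a = [(0, "A", 0), (67, "A", 1), (133, "A", 2)]
cam_b = [(5, "B", 0), (71, "B", 1), (138, "B", 2)]

order = playout_order(cam_a, cam_b)
for ntp_ms, sender, frame_no in order:
    print(ntp_ms, sender, frame_no)
```

Because both senders stamp their frames against the same clock, frame n of one camera ends up next to frame n of the other, regardless of when each stream was started.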

Summary:

Step 1: Sync all devices to a common clock using the ntpd daemon. In our case we synced the clocks of all devices to the receiver, so that even if the receiver drifts, the drift is propagated to all the senders.

Step 2: Tell the pipeline to use the NTP clock by setting ntp-time-source=ntp.

Setting rtcp-profile=avpf lets the sender send RTCP packets soon after streaming starts; otherwise the first RTCP packet is sent only after 5 seconds. The RTCP packets hold the key to our synchronization.

Step 3: Implement the receiver with a common rtpbin and the following property settings:

ntp-time-source=ntp

buffer-mode=synced

ntp-sync=true

rtcp-sync-send-time=false

Note: rtpbin has its own buffer, so there is no need to add an rtpjitterbuffer in this case.
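The receiver settings from Step 3 can be collected in one place and formatted as a gst-launch style element description. This is only a sketch: the helper function and the element name are hypothetical, and the rest of the receiver pipeline (UDP sources, depayloaders, decoders and sinks) is deliberately left out.

```python
# Receiver-side rtpbin properties, taken from Step 3 above.
RECEIVER_RTPBIN_PROPS = {
    "ntp-time-source": "ntp",
    "buffer-mode": "synced",
    "ntp-sync": "true",
    "rtcp-sync-send-time": "false",
}


def element_description(factory, name, props):
    """Format an element and its properties as gst-launch style text."""
    parts = [factory, f"name={name}"] + [f"{key}={value}" for key, value in props.items()]
    return " ".join(parts)


rtpbin_desc = element_description("rtpbin", "rtpbin", RECEIVER_RTPBIN_PROPS)
print(rtpbin_desc)
```

Keeping the properties in a dictionary makes it easy to switch the time source for another method while leaving the remaining settings unchanged.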

Streams used: RTP/RTCP

Latency observed: 0-40 ms, and below 20 ms most of the time, which is what we wanted.

At a frame rate of 15 fps, one frame takes approximately 1/15 s ≈ 66.7 ms, so we are doing much better than one frame interval.
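The frame-interval arithmetic can be checked with a few lines. The worst-case figure is the 40 ms upper bound reported above; the function and variable names are illustrative only.

```python
def frame_interval_ms(fps):
    """Duration of one frame in milliseconds."""
    return 1000.0 / fps


interval_ms = frame_interval_ms(15)  # about 66.7 ms at 15 fps
observed_worst_ms = 40               # upper end of the observed 0-40 ms range
within_one_frame = observed_worst_ms < interval_ms

print(f"{interval_ms:.1f} ms per frame, within one frame: {within_one_frame}")
```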

Advantages: Synchronization to within one frame; works best for systems with multiple senders and one receiver.

Disadvantages:

1. The NTP clock is not very accurate (there are much more accurate mechanisms such as PTP), and it is very difficult to handle fluctuations in the RTTs of packets exchanged with the NTP clock.

2. Relying on an external mechanism to sync the clocks every time is not efficient.

3. Difficult to implement, as GStreamer 1.8 is needed, which is not readily available as a package. Git master is needed, and there are many dependency problems.

3. Using Netclock and clock-time:

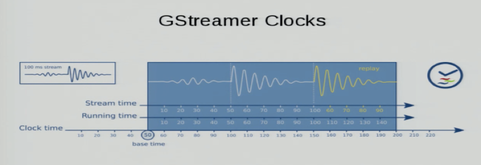

C. Net-Clock

In the third and final method we try to overcome an important disadvantage of the previous method. Netclock is a clock provided by GStreamer that is similar to NTP but works internally to synchronize the pipeline clock between a sender and a receiver.

After syncing the pipeline clocks, we map every RTP logical timestamp to a clock-time timestamp available from RTCP. Note that RTCP's NTP timestamp field can be made to carry a value from any time source; ntp-time-source does exactly that.

The clock-time is essentially running-time + base-time, which is the same for all streams once their pipeline clocks are synced. It is therefore a valid substitute for NTP time.

Therefore, devices are now synced on clock-time rather than on a global NTP time.
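Why clock-time agrees across pipelines can be seen in a short sketch. It assumes two pipelines that already share a synchronized clock but were started at different moments; the values (in nanoseconds) and the function name are hypothetical.

```python
def clock_time_ns(base_time_ns, running_time_ns):
    """Absolute clock time of a buffer: the pipeline's base-time plus its running-time."""
    return base_time_ns + running_time_ns


# Reading of the shared, synchronized pipeline clock at the moment a frame is captured.
now_ns = 10_000_000_000

# The pipelines were set to PLAYING at different times, so their base-times differ.
base_a_ns = 1_000_000_000
base_b_ns = 3_500_000_000

# Running-time is the clock reading minus the base-time of each pipeline.
running_a_ns = now_ns - base_a_ns
running_b_ns = now_ns - base_b_ns

clock_a_ns = clock_time_ns(base_a_ns, running_a_ns)
clock_b_ns = clock_time_ns(base_b_ns, running_b_ns)
print(running_a_ns, running_b_ns, clock_a_ns, clock_b_ns)
```

The running-times differ because the pipelines started at different moments, but adding each pipeline's own base-time back yields the same clock-time for frames captured at the same instant.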

Summary:

Step 1: Run GStreamer's netclock.c and use the system clock for the pipelines at the sender.

Step 2: Use ntp-time-source=clock-time on the sender side.

Step 3: Use netclientclock.c on the receiver to sync the receiver's pipeline clock to the sender's pipeline clock.

On the receiver side, use a common rtpbin and set the following properties:

ntp-time-source=clock-time

buffer-mode=synced

ntp-sync=true

rtcp-sync-send-time=false

Advantages: Works best for the scenario with one sender and multiple receivers; gives an even finer degree of sync than NTP.

Disadvantages: Making multiple devices use the same pipeline clock involves some hassle. The pipeline clocks are set internally, so once you exit the program the changes are lost.

Overall summary:

Although we did not have much time to experiment with method 3 (Net-Clock), we concluded that, of methods 2 and 3, method 2 (NTP clocks) is better suited to our scenario, since it has only one receiver and multiple senders. Syncing all the devices is much easier and hassle-free.
